TLDR:

- BirdDog NDI encoder-originated streams do not synchronise on OBS unless Low Latency Mode is selected.

- Low Latency Mode is a hacky workaround for a BirdDog encoder issue.

- The above hack results in frame glitches and audio distortion.

- The above is all a result of BirdDog encoders not using network time synchronisation, but it doesn’t help that obs-ndi also doesn’t make use of the NDI Frame Synchronizer facility.

- BirdDog Flex 4K Encoders supply enough power through their DC out port to power small bridge cameras, like the Panasonic FZ2500, but not bigger cameras like the Sony PMW-Z150.

- BirdDog Encoders are buggy and have a rushed feel to them.

Main Story:

My first multicam shoot was around 12 years ago – and I was a camera operator (as opposed to an equipment supplier, mixer operator or technician). We used a Panasonic mixer, I think it had 4 video channels, lots of different wipes/transitions and it took composite video feeds. It wasn’t great but it was fun.

Fast forward another 6 years and Blackmagic Design had introduced affordable SDI-based mixers. Fortunately our cameras were all SDI capable and so the switch to SDI was swift and hugely enjoyable. Suddenly we had beautiful, uncompressed full HD, no hum bars, no sadness.

Then, about two years ago, I came across the SlingStudio system, which was FANTASTIC, but had a whole bunch of limitations. I wrote about this previously, but in a nutshell the SlingStudio system is awesome provided your project fits within a very narrow set of criteria.

Things have moved on though, and as I write this I am trying to make the jump into the wonderful world of IP-based video switching. Oh man, what a thrill.

My company was doing live streaming stuff on occasion before the coronavirus hit. In fact, for years before the pandemic I *begged* multiple clients to allow me to stream their productions. Some embraced the technology as a means of marketing themselves as tech-savvy, others rejected the proposal outright and some saw the technology as a way of connecting with clients and investors. Unfortunately the latter was the minority.

Of course Wuhan happened and now _everything_ is live streamed. Enter NDI and specifically BirdDog, an Australian company (mmm déjà vu/Blackmagic Design) that produces very affordable IP video encoders. Seriously, on paper these things RAWK. They’re affordable, they take POE (Power over Ethernet), they’re IP based, they accept any video signal up to 4K, they support PTZ cameras and most excitingly they can output 12V DC @ roughly 15W. This is better than what I was looking for.

BirdDog NDI encoders are in very short supply currently (Nov 2020 -> March 2021), but by scrounging around on the web I was able to acquire the encoders I needed for an upcoming shoot. I landed up buying a combination of new and used encoders from 4 different vendors from all over the world.

The BirdDog Flex 4K encoders are really small and fit beautifully on top of my go-to live streaming cameras, namely the Panasonic FZ2500. I suspected that the encoder’s DC output would be good enough to power the camera, and I was right. One ethernet cable with POE (52V) is sufficient to drive the encoder, the camera and comms… this is SO COOL.

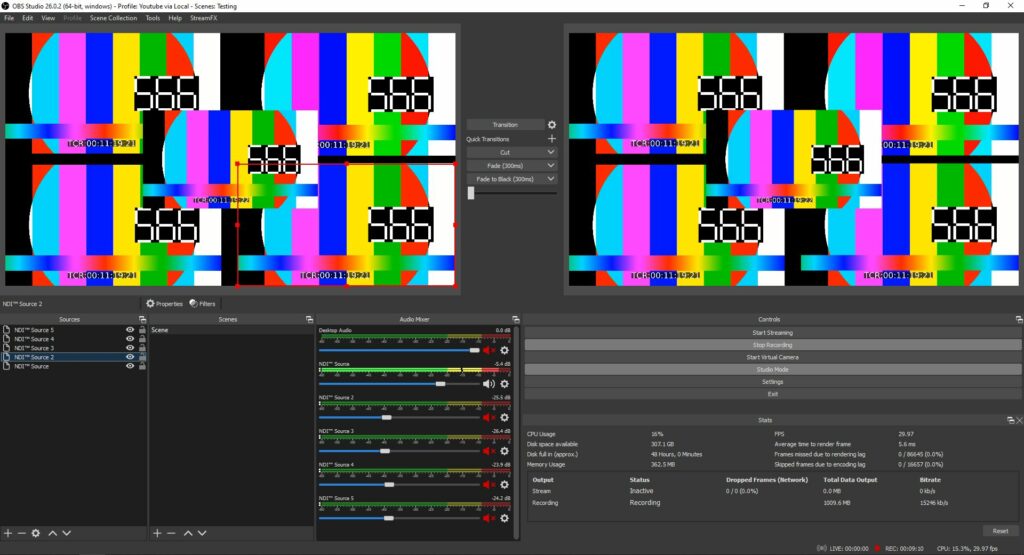

A ton of testing was undertaken and things were looking really good. Pictured above is a 24-port Mikrotik Router+Switch, with auto passive and active POE on every ethernet port. Attached are some cameras. An OBS instance, off-screen, is ingesting the feeds and compositing them into a single frame, which is then being output to a BirdDog Mini in decoder mode and being displayed on the monitor.

Things were stable and it seemed like a good time to try out the system on a simple upcoming job, which consisted of a camera feed and a laptop feed.

The shoot went really well, the quality was excellent. A small five-port POE switch provided power and data to devices and the OBS NDI output functionality (via obs-ndi) worked well for previews and duplicating presentation machine feeds to comfort monitors.

It was time to try something more ambitious: an offline, shoot-for-live production using 5 cameras with 3 camera operators, comms and a comfort monitor. Unfortunately I don’t have any photos of this for various reasons.

The structure of this shoot was as follows:

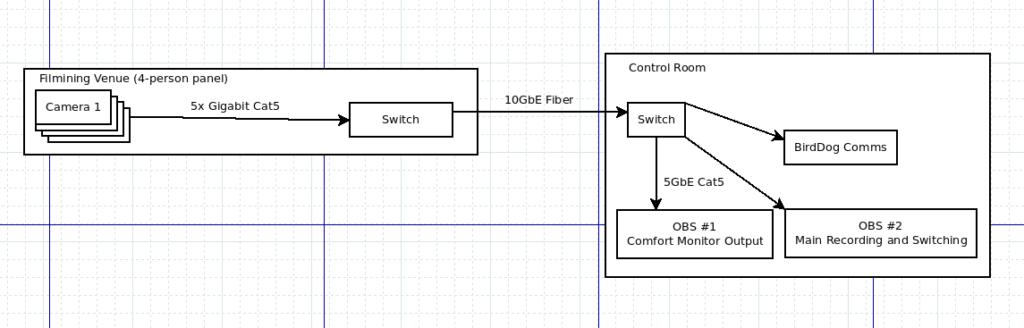

- A 24-port Mikrotik router and switch was positioned in the main venue. 6 NDI devices were connected to this switch: 5 cameras (wide, mid and two close shots, as well as one camera purely for audio) and a BirdDog Mini used as a decoder for the comfort monitor.

- A 10 Gigabit fibre link from the 24-port switch to a smaller 5-port 10GbE Mikrotik switch.

- Three Windows (meh, not Linux) laptops were connected to this switch. An OBS instance on the first laptop handled the comfort monitor and video playback. It output pre-recorded video content via NDI to both the comfort monitor and an OBS instance running on the second laptop.

- The second laptop switched between the NDI camera sources as well as the video playback laptop and recorded the result to a file. This was a “deferred” live stream, which was transmitted the following day.

- One laptop had a Caldigit 10GbE Thunderbolt 3-based NIC, the other had a 5GbE USB3 Kensington ethernet NIC. Pricing for 10G, 5G and 2.5G is near exponential in nature and for HD streams a 2.5G adapter is more than adequate.

- Finally, a third Windows laptop was running BirdDog’s Comms Lite application. This application requires that two send groups be configured in the NDI Access Manager, a requirement which isn’t obvious and is documented in a difficult-to-find section of BirdDog’s Knowledgebase. Regardless, BirdDog’s Jon was quick to respond when I asked for help. Also, if you bought the BirdDog headsets while they were still for sale, you must charge them using the micro-USB port before use. If you don’t, the microphone won’t work (this really screwed me a bit, and it’s also not documented anywhere).

So we were sorted: multiple high-performance laptops, 10 and 5 GbE ethernet interfaces, a 10GbE backbone, etc. The encoders were all configured for 120 Mbit/s. All camera feeds were ingested by both OBS machines and proxies were ingested by BirdDog Comms Lite (which shows previews).
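For a rough sense of the headroom, here is a back-of-envelope sketch of the ingest load on each OBS machine. It assumes every camera stream really runs at the configured 120 Mbit/s and ignores the proxies, the playback feed and protocol overhead, so treat it as an estimate rather than a measurement. The function names are mine.

```python
# Back-of-envelope NDI ingest budget. Assumptions (mine, not measured):
# every camera stream runs at the configured 120 Mbit/s, and proxies,
# the playback feed and protocol overhead are ignored.
ENCODER_MBIT = 120   # per-encoder bitrate configured for the shoot
CAMERAS = 5          # camera feeds ingested by each OBS machine


def ingest_mbit(streams: int, per_stream_mbit: float = ENCODER_MBIT) -> float:
    """Total inbound bitrate for one receiving machine, in Mbit/s."""
    return streams * per_stream_mbit


def utilisation(streams: int, link_mbit: float) -> float:
    """Fraction of a link consumed by the inbound streams."""
    return ingest_mbit(streams) / link_mbit


if __name__ == "__main__":
    links = [("1GbE", 1000), ("2.5GbE", 2500), ("5GbE", 5000), ("10GbE", 10000)]
    for name, link_mbit in links:
        print(f"{name}: {utilisation(CAMERAS, link_mbit):.0%} of the link")
```

Five feeds come to 600 Mbit/s, roughly a quarter of a 2.5GbE link, which is why a 2.5G adapter is plenty for HD.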

Problems:

- The first issue was stuttering. Our cameras were configured for 1080p @ 30fps, which on the wire is actually 29.97 fps. We were using 2x Panasonic FZ2500s (awesome cameras), 1x Sony PMW-Z150 ENG-style camera and a Sony A7s MkII.

- If you set the BirdDog NDI encoders to “Auto” and they don’t have a signal on the HDMI port, OBS will crash. If you set the encoders to a specific framerate and resolution you won’t have this problem.

- When set to AUTO, the encoders usually detected the incoming framerate as 59.94, which I don’t believe it was, but this reduced the stuttering. The next thing that further reduced the stutter was configuring OBS for a canvas framerate of 29.97 fps.

- At this point stutter had pretty much been eliminated, yay.

- The audio and video being rendered on the comfort monitor via the BirdDog Mini decoder (playing the feed from one of the OBS instances) were out of sync by around 400ms. I don’t know why.

- During the production, two of the camera feeds went severely out of sync, by as much as 2 seconds. Restarting the sources in question (by changing one of their properties) temporarily fixed the sync.

- The audio occasionally stuttered (momentary glitch every 4-10 minutes).

- The audio was severely distorted and amplified compared to what the camera’s VU meters were indicating.
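One plausible reason the framerate changes helped (my guess, not something I verified) is plain arithmetic. “29.97” is really 30000/1001 fps, so if the OBS canvas had been sitting at an even 30 fps, the two rates would drift apart by a whole frame every so often and a frame would have to be repeated or dropped. The sketch below works that out with exact fractions; the helper names are made up for illustration.

```python
from fractions import Fraction
from typing import Optional

NTSC_30 = Fraction(30000, 1001)  # what cameras call "29.97" fps
NTSC_60 = Fraction(60000, 1001)  # what cameras call "59.94" fps


def is_clean_multiple(source_fps: Fraction, canvas_fps: Fraction) -> bool:
    """True when one rate is an exact integer multiple of the other."""
    ratio = Fraction(source_fps) / Fraction(canvas_fps)
    return ratio.denominator == 1 or ratio.numerator == 1


def seconds_between_slips(source_fps: Fraction,
                          canvas_fps: Fraction) -> Optional[Fraction]:
    """For nearly equal rates: seconds until they drift apart by one frame.

    Returns None when the rates divide cleanly, since no frame ever has to
    be repeated or dropped to keep up.
    """
    if is_clean_multiple(source_fps, canvas_fps):
        return None
    return 1 / abs(Fraction(source_fps) - Fraction(canvas_fps))


if __name__ == "__main__":
    slip = seconds_between_slips(NTSC_30, Fraction(30))
    print(f"29.97 source on a 30 fps canvas: one slip every {float(slip):.2f} s")
    print("59.94 source on a 29.97 canvas divides cleanly:",
          is_clean_multiple(NTSC_60, NTSC_30))
```

A 29.97 source on a 30 fps canvas slips one frame every 1001/30 seconds (about 33 seconds), while 59.94 into 29.97 is an exact 2:1 ratio and 29.97 into 29.97 is 1:1.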

Unfortunately, one could describe the above as a mild f*ck up. Luckily we weren’t live and had backup recordings of everything.

Before we head into the sync side of things, it’s useful to have a broad overview of how OBS synchronises streams. In short, OBS uses frame timestamps to determine when to render a frame, so timestamps are extremely important for syncing. OBS requests a new frame on a timer (a tick) and, per source, asks: “is the source’s current frame newer than the last frame we rendered, AND has the frame’s timestamp elapsed relative to the system time?” If so, it renders the frame; otherwise it keeps the last good frame.
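To make that per-tick decision concrete, here is a minimal sketch of the rule as I’ve described it. This is not OBS’s actual code; `Frame` and `pick_frame` are hypothetical names, and the real implementation has buffering and timestamp-adjustment logic that this leaves out.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Frame:
    timestamp_ns: int  # when the frame should be shown, on the receiver's clock
    label: str         # only here to make the example readable


def pick_frame(current: Optional[Frame],
               candidate: Optional[Frame],
               now_ns: int) -> Optional[Frame]:
    """Decide what a source shows on this tick.

    The candidate replaces the current frame only if it is newer than the
    frame already on screen AND its timestamp has been reached. Otherwise
    the last good frame is kept.
    """
    if candidate is None:
        return current
    is_newer = current is None or candidate.timestamp_ns > current.timestamp_ns
    is_due = candidate.timestamp_ns <= now_ns
    return candidate if (is_newer and is_due) else current


if __name__ == "__main__":
    shown = Frame(100, "A")
    incoming = Frame(200, "B")
    print(pick_frame(shown, incoming, now_ns=150).label)  # B is not due yet
    print(pick_frame(shown, incoming, now_ns=200).label)  # B is due now
```

The point of the sketch is that a frame with a timestamp far in the “future” (from the receiver’s point of view) just sits there waiting, which is exactly what bad timestamps cause.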

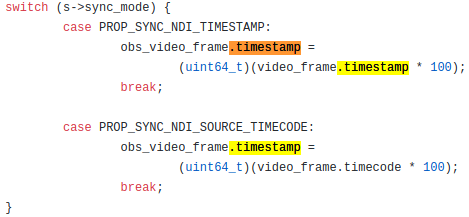

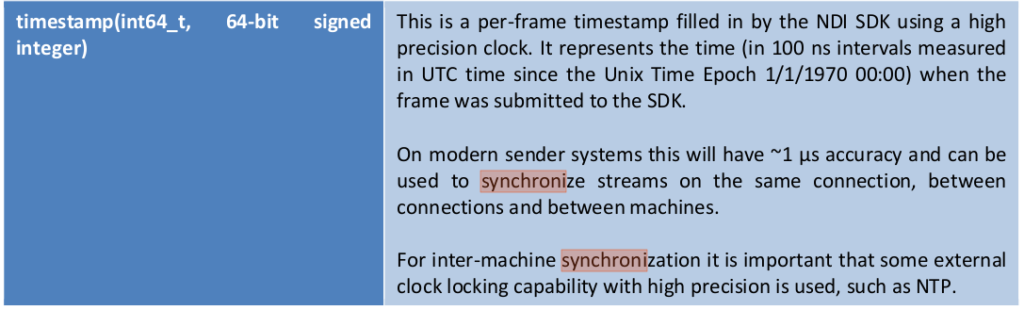

The NDI SDK documentation, which is fantastic, details two sync modes, namely source and encoder sync. OBS-NDI maps source sync to “Source Timing” and encoder sync to “Network Timing”. If your cameras emit timecode and they’re synced, “Source Timing” should work well… but my cameras are cheap, so I don’t have this option.

The next option is Network Timing/encoder sync. In this case the encoder uses its internal clock (aka wall clock) to timestamp the outgoing frames. These timestamps have a resolution of 100ns. This sync method is only useful if the clocks on your encoders are synced. BirdDog does not include any kind of synchronisation method on their encoders – so this doesn’t work. In fact this is super annoying because further analysis demonstrates that they’re using an off-the-shelf distribution of Linux which can easily include an NTP client. More on this in the teardown post.
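Here is a small sketch of why unsynced encoder clocks wreck this mode. It assumes timestamps are counted in 100ns ticks of each encoder’s own wall clock, and that two encoders capture the very same instant. The function names and the 0.4 second offset are made up for illustration.

```python
TICKS_PER_SECOND = 10_000_000  # one tick = 100 ns


def stamp(capture_time_s: float, clock_offset_s: float) -> int:
    """Timestamp an encoder would attach, given how far its clock is off.

    capture_time_s is the true capture time; clock_offset_s is the error of
    this encoder's wall clock relative to true time (hypothetical values).
    """
    return round((capture_time_s + clock_offset_s) * TICKS_PER_SECOND)


def apparent_skew_ms(ts_a: int, ts_b: int) -> float:
    """How far apart two frames look to the receiver, in milliseconds."""
    return (ts_b - ts_a) * 1000 / TICKS_PER_SECOND


if __name__ == "__main__":
    # Both encoders capture the same instant (t = 10 s), but encoder B's
    # clock runs 0.4 s ahead of encoder A's.
    ts_a = stamp(10.0, clock_offset_s=0.0)
    ts_b = stamp(10.0, clock_offset_s=0.4)
    print(f"Receiver sees the frames {apparent_skew_ms(ts_a, ts_b):.0f} ms apart")
```

Any receiver that trusts these timestamps will show the two frames 400ms apart, even though they were captured together. Sync the clocks and the skew collapses to zero.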

The last sync mode is “Low Latency” mode, which essentially renders frames on receipt, immediately. This is a hack because it can result in frame stuttering and distorted audio.

In order to test syncing I started by acquiring a super cheap Chinese HDMI splitter.

In concert with another splitter I was able to duplicate one HDMI signal to 5 BirdDog encoders, which consisted of 4x HDMI Flex 4K Ins and 1x BirdDog Mini.

On an oldish Linux laptop (Ubuntu), I ran an ffmpeg/ffplay combo command which outputs a test video pattern as well as burnt in timecode, down to the frame. I also included a “beep” file, which outputs an auditory beep every 5 seconds (5 seconds on, 5 seconds off). Below is the command:

    ffmpeg -f lavfi -i anullsrc=channel_layout=stereo:sample_rate=44100 -filter_complex "amovie=intermittenbeep.mp3:loop=0,volume=0.8,asetpts=N/SR/TB[bg];[bg][0]amix=duration=shortest" -f lavfi -i testsrc=duration=36000:size=1920x1080:rate=30 -vf drawtext="fontsize=15:fontfile=/Library/Fonts/DroidSansMono.ttf:\
    timecode='00\:00\:00\:00':rate=25:text='TCR\:':fontsize=72:fontcolor='white':\
    boxcolor=0x000000AA:box=1:x=860-text_w/2:y=960" -c:v prores -pix_fmt yuv422p10 -f mov -movflags frag_keyframe+empty_moov - | ffplay -fs -i -

The font file above isn’t important as ffmpeg will default to an available font if it can’t find the file specified.

As can be seen above, on a high speed network, even on gigabit ethernet, with synthesised timestamps OBS renders the feeds exactly in sync (for video at least). Audio is still distorted though. My solution to this is to use Audinate’s Dante AVIO as the audio carrier and Ardour DAW software as the mixer/recorder. I’ve confirmed this works well in practice.

NDI Sources must be configured to use Low Latency mode.

If you’d like a slightly deeper dive into BirdDog Encoders, click here.

Update February 2021:

BirdDog Encoders indeed run a modified version of Ubuntu Linux, complete with package manager. They are in fact fully-fledged computers capable of running a wide variety of software. Yes, it’s possible to run Spotify on a BirdDog Encoder, not that you’d want to. This is important because it is actually very easy to install a network time synchronisation client on these encoders. I detail this in my BirdDog Custom Firmware post. Critically, with the NTP client installed, the encoder clocks synced and obs-ndi set to “network timing” mode, everything syncs perfectly. Such a simple fix, one line and it works. BirdDog regularly blames OBS for sync issues instead of fixing the problem on their side.
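For the curious, the arithmetic an NTP-style client uses to work out how far off its clock is can be sketched in a few lines. This is the standard four-timestamp offset/delay calculation, not anything BirdDog-specific, and the timestamps in the example are invented.

```python
from typing import Tuple


def ntp_offset_and_delay(t0: float, t1: float,
                         t2: float, t3: float) -> Tuple[float, float]:
    """Standard NTP-style clock offset and round-trip delay, in seconds.

    t0: client sends request   (client clock)
    t1: server receives it     (server clock)
    t2: server sends reply     (server clock)
    t3: client receives reply  (client clock)
    """
    offset = ((t1 - t0) + (t2 - t3)) / 2
    delay = (t3 - t0) - (t2 - t1)
    return offset, delay


if __name__ == "__main__":
    # Invented example: the encoder's clock is 2 s behind the server and
    # the network round trip takes 20 ms.
    offset, delay = ntp_offset_and_delay(t0=0.000, t1=2.010, t2=2.011, t3=0.021)
    print(f"clock offset: {offset:+.3f} s, round-trip delay: {delay * 1000:.0f} ms")
```

Once every encoder applies its own offset, their wall clocks agree, and the timestamps they attach in “network timing” mode finally mean the same thing to OBS.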